Azure Load Balancer (L4)

In AWS terms, it's the equivalent of a Network Load Balancer (NLB), because Azure Load Balancer operates at layer 4 of the Open Systems Interconnection (OSI) model. It's the single point of contact for clients.

| OSI Layer | AWS | Azure |
|---|---|---|
| L4 | Network LB | Azure LB |
| L7 | Application LB | App Gateway |

The load balancer distributes inbound flows that arrive at its front end to backend pool instances. Flows are distributed according to the configured load-balancing rules and health probes. The backend pool instances can be Azure Virtual Machines (VMs) or instances in a Virtual Machine Scale Set (VMSS).

Types of LB

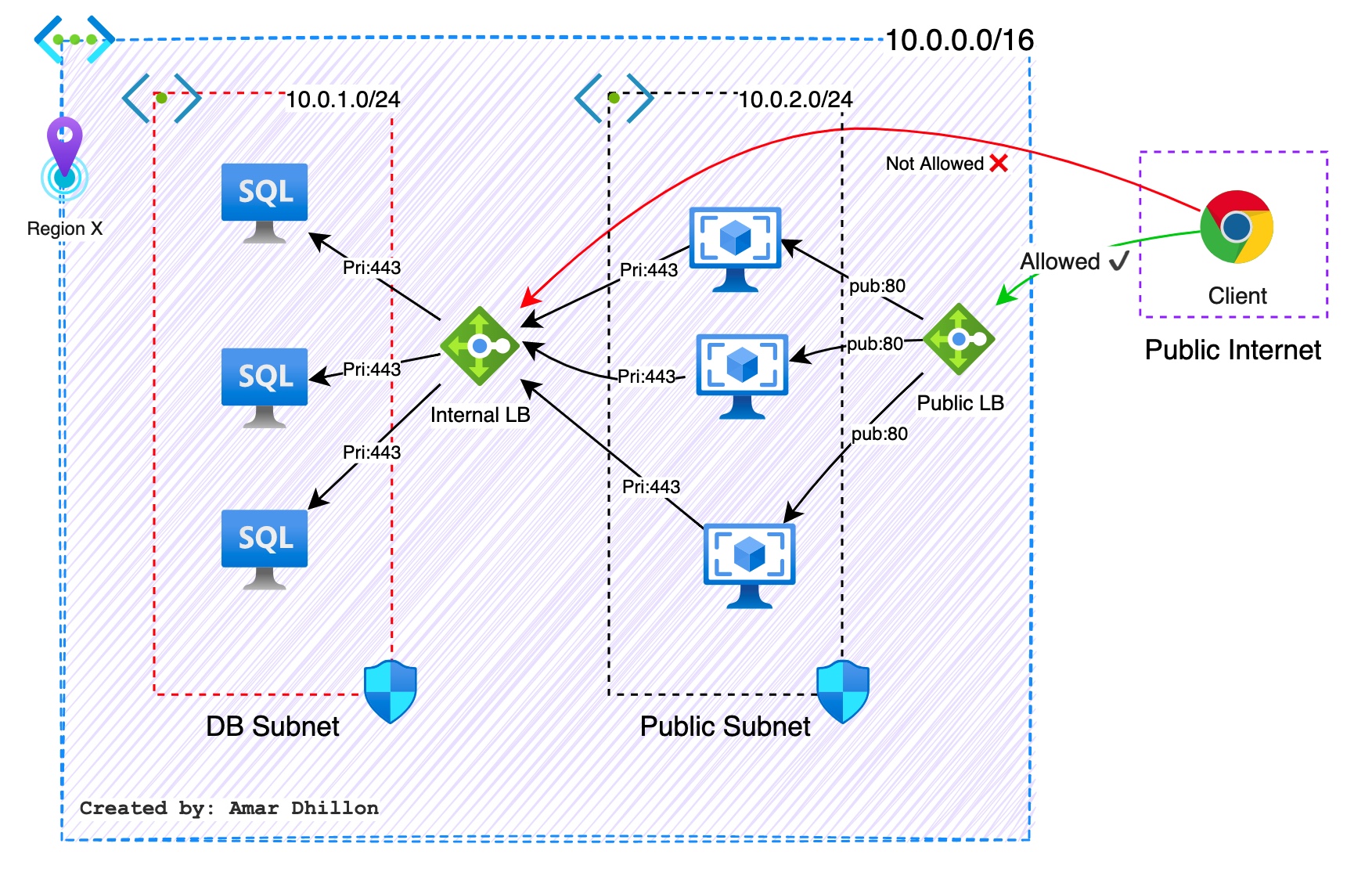

The purpose of a load balancer is to distribute the traffic to the servers after verifying the health of the backend server. Depending upon the placement of the load balancer in the architecture, the load balancer can be categorized as:

- Public/External LB: As the name suggests, a public load balancer has a public IP address and is Internet facing. In a public load balancer, the public IP address and a port number are mapped to the private IP address and port number of the VMs that are part of the backend pool.

- Private/Internal LB: There are scenarios where you want to load balance requests between resources deployed inside a virtual network without exposing any Internet endpoint. For example, a set of database servers could share the database requests coming from the front-end servers. Since the backend database servers cannot be exposed to the Internet, the load balancer must have no public endpoint. An internal load balancer has no public IP address and uses a private IP address for all communication. This private IP address can be reached by resources within the same virtual network, within peered networks, or from on-premises over VPN.

Backend pool

The backend pool is a critical component of the load balancer. It defines the group of resources that will serve traffic for a given load-balancing rule. The backend pool contains the IP addresses of the network interface cards (NICs) attached to the set of virtual machines or to the virtual machine scale set.

In the Standard SKU, you can have up to 1,000 instances, and the Basic SKU can have up to 100 instances in the backend pool.

There are two ways of configuring a backend pool:

- Network Interface Card (NIC)

- IP address

Floating IP

Why is Floating IP required?

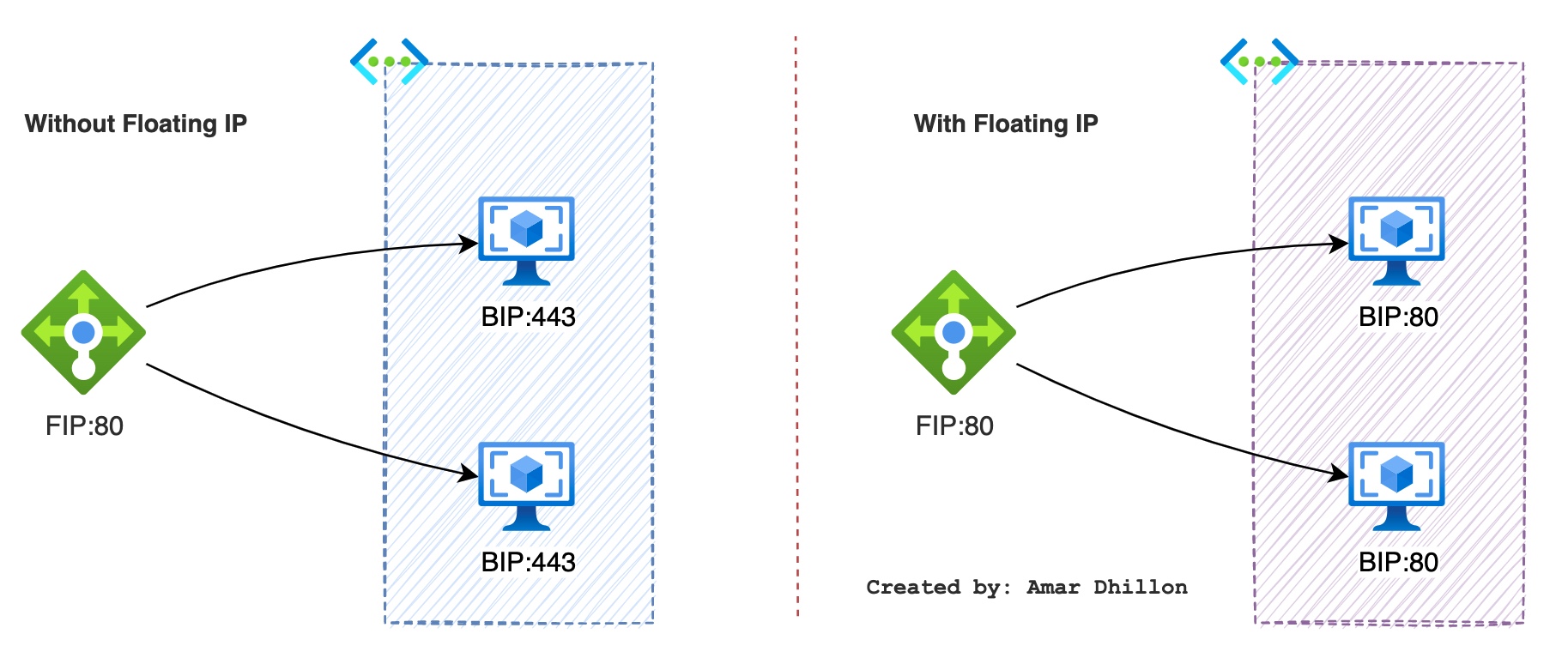

If you want to reuse the backend port across multiple rules, you must enable Floating IP in the load balancing rule definition.

Floating IP is Azure's terminology for a portion of what is known as Direct Server Return (DSR). DSR consists of two parts: a flow topology and an IP address mapping scheme. At a platform level, Azure Load Balancer always operates in a DSR flow topology regardless of whether Floating IP is enabled or not. This means that the outbound part of a flow is always correctly rewritten to flow directly back to the origin.

When Floating IP is enabled, Azure changes the IP address mapping to the frontend IP address (FIP) of the load balancer instead of the backend instance's IP (BIP). Without Floating IP, Azure exposes the VM instance's IP. Mapping to the frontend IP allows for more flexibility, such as reusing the same backend port across multiple rules.
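The port-reuse point can be illustrated with a toy model of the address mapping. This is only a sketch under stated assumptions, not how Azure implements it: the `Rule` type, the `destination_seen_by_backend` function, and all IP addresses are hypothetical names and values chosen for illustration.

```python
from typing import NamedTuple, Tuple


class Rule(NamedTuple):
    """Hypothetical, simplified load-balancing rule."""
    frontend_ip: str
    frontend_port: int
    backend_port: int
    floating_ip: bool


def destination_seen_by_backend(rule: Rule, backend_ip: str) -> Tuple[str, int]:
    """Destination (IP, port) of a flow as it arrives at the backend instance."""
    if rule.floating_ip:
        # Floating IP: the destination IP stays the frontend IP (FIP).
        return (rule.frontend_ip, rule.backend_port)
    # Default: the destination IP is mapped to the backend instance's IP (BIP).
    return (backend_ip, rule.backend_port)


backend_ip = "10.0.0.4"  # assumed private address of one backend VM

# Two frontends, both forwarding to backend port 80.
without_fip = [Rule("203.0.113.10", 80, 80, False), Rule("203.0.113.11", 80, 80, False)]
with_fip = [Rule("203.0.113.10", 80, 80, True), Rule("203.0.113.11", 80, 80, True)]

collide = {destination_seen_by_backend(r, backend_ip) for r in without_fip}
distinct = {destination_seen_by_backend(r, backend_ip) for r in with_fip}

print(len(collide))   # 1: both rules arrive at the same (BIP, 80), so they are ambiguous
print(len(distinct))  # 2: each rule keeps its own (FIP, 80)
```

In this model, two rules that share a backend port are only distinguishable on the backend when the destination keeps the frontend IP, which is why the backend instance must also be configured to accept traffic for that frontend address.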

Health Probes

Azure Load Balancer rules require a health probe to detect the endpoint status. The configuration of the health probe and probe responses determines which backend pool instances will receive new connections.

Why is a health probe required?

The purpose of a health probe is to let the load balancer know the status of the application. The health probe will be constantly checking the status of the application using an HTTP or TCP probe. If the application is not responding after a set of consecutive failures, the load balancer will mark the virtual machine as unhealthy. Incoming requests will not be routed to the unhealthy virtual machines. The load balancer will start routing the traffic once the health probe is able to identify that the application is working and is responding to the probe.

How does an HTTP health probe work?

With an HTTP probe, the endpoint is probed every 15 seconds (default value). If the response is HTTP 200, the application is considered healthy. If the application returns any other status code, or does not respond within the timeout period, the probe counts as failed, and after the configured number of consecutive failures the virtual machine is marked unhealthy.
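The probe logic can be sketched as a small state tracker. This is a simplified model, not Azure's implementation: the function name `track_health` and the threshold of 2 consecutive failures are assumptions made for illustration, and a timed-out probe is represented as `None`.

```python
from typing import Iterable, List, Optional


def track_health(responses: Iterable[Optional[int]],
                 unhealthy_threshold: int = 2) -> List[bool]:
    """Replay probe results and return the instance's health after each probe.

    Each item is an HTTP status code, or None when the probe timed out.
    The threshold is an assumed value for this sketch.
    """
    healthy = True
    consecutive_failures = 0
    history = []
    for status in responses:
        if status == 200:
            # A successful probe resets the failure count and restores traffic.
            consecutive_failures = 0
            healthy = True
        else:
            consecutive_failures += 1
            if consecutive_failures >= unhealthy_threshold:
                # Enough failures in a row: stop sending new connections.
                healthy = False
        history.append(healthy)
    return history


print(track_health([200, 500, None, 200]))
# [True, True, False, True]
```

A single failed probe does not remove the instance in this model; only a run of consecutive failures does, and the next successful probe brings it back.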

Hashing / LB Rules

Without load balancer rules, the traffic that hits the front end will never reach the backend pool.
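A minimal sketch of why rules are required: a rule is what connects a frontend (IP, port, protocol) to a backend pool and port. The `rules` and `pools` tables, the pool name, the addresses, and the `candidates` function are all hypothetical and only illustrate the lookup.

```python
from typing import Dict, List, Optional, Tuple

# (frontend IP, frontend port, protocol) -> (backend pool name, backend port)
RuleKey = Tuple[str, int, str]

rules: Dict[RuleKey, Tuple[str, int]] = {
    ("203.0.113.10", 80, "tcp"): ("web-pool", 8080),
}

pools: Dict[str, List[str]] = {
    "web-pool": ["10.0.0.4", "10.0.0.5"],
}


def candidates(frontend_ip: str, port: int, protocol: str) -> Optional[List[Tuple[str, int]]]:
    """Return the backend (IP, port) pairs eligible for a flow, or None if no rule matches."""
    rule = rules.get((frontend_ip, port, protocol))
    if rule is None:
        return None  # no matching rule: the flow is never forwarded
    pool_name, backend_port = rule
    return [(ip, backend_port) for ip in pools[pool_name]]


print(candidates("203.0.113.10", 80, "tcp"))   # [('10.0.0.4', 8080), ('10.0.0.5', 8080)]
print(candidates("203.0.113.10", 443, "tcp"))  # None
```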

The hash is used to route traffic to healthy backend instances within the backend pool. The algorithm provides stickiness only within a transport session. When the client starts a new session from the same source IP, the source port changes and causes the traffic to go to a different backend instance.

Key differences:

- 5 tuple (default): Traffic from the same client IP can be routed to any healthy instance in the backend pool.

- 3 tuple: Traffic from the same client IP and protocol is routed to the same backend instance.

- 2 tuple: Traffic from the same client IP is routed to the same backend instance.

The 3-tuple and 2-tuple modes are also called source IP affinity.

5-tuple hash

Azure Load Balancer uses a five-tuple, hash-based distribution mode by default.

The five tuple consists of:

- Source IP

- Source port

- Destination IP

- Destination port

- Protocol type

3-tuple hash

Client IP and protocol (3-tuple) - Specifies that successive requests from the same client IP address and protocol combination will be handled by the same backend instance.

- Source IP

- Destination IP

- Protocol type

2-tuple hash

Client IP (2-tuple) - Specifies that successive requests from the same client IP address will be handled by the same backend instance.

- Source IP

- Destination IP
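The three modes differ only in which fields feed the hash. The sketch below illustrates that idea; it is not Azure's actual hash function, which is not described here. SHA-256 is a stand-in, and `Flow`, `hash_key`, `pick_backend`, and all addresses are hypothetical.

```python
import hashlib
from typing import List, NamedTuple


class Flow(NamedTuple):
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str


def hash_key(flow: Flow, mode: int) -> tuple:
    """Select the fields that take part in the hash for a given tuple mode."""
    if mode == 5:
        return tuple(flow)
    if mode == 3:
        return (flow.src_ip, flow.dst_ip, flow.protocol)
    if mode == 2:
        return (flow.src_ip, flow.dst_ip)
    raise ValueError("mode must be 5, 3, or 2")


def pick_backend(flow: Flow, backends: List[str], mode: int = 5) -> str:
    """Map a flow to one backend; SHA-256 is an assumed stand-in hash."""
    key = "|".join(map(str, hash_key(flow, mode))).encode()
    digest = hashlib.sha256(key).digest()
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]


backends = ["10.0.0.4", "10.0.0.5", "10.0.0.6"]  # assumed healthy instances
first = Flow("198.51.100.7", 50001, "203.0.113.10", 80, "tcp")
second = first._replace(src_port=50002)  # new session from the same client

# 2-tuple and 3-tuple ignore the source port, so both sessions land on the same instance.
assert pick_backend(first, backends, 2) == pick_backend(second, backends, 2)
assert pick_backend(first, backends, 3) == pick_backend(second, backends, 3)

# 5-tuple includes the source port, so the two sessions may land on different instances.
print(pick_backend(first, backends, 5), pick_backend(second, backends, 5))
```

Within one session the 5-tuple never changes, so packets of that session always reach the same instance in every mode; the modes only differ in what happens when the client opens a new session.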