Storage Accounts

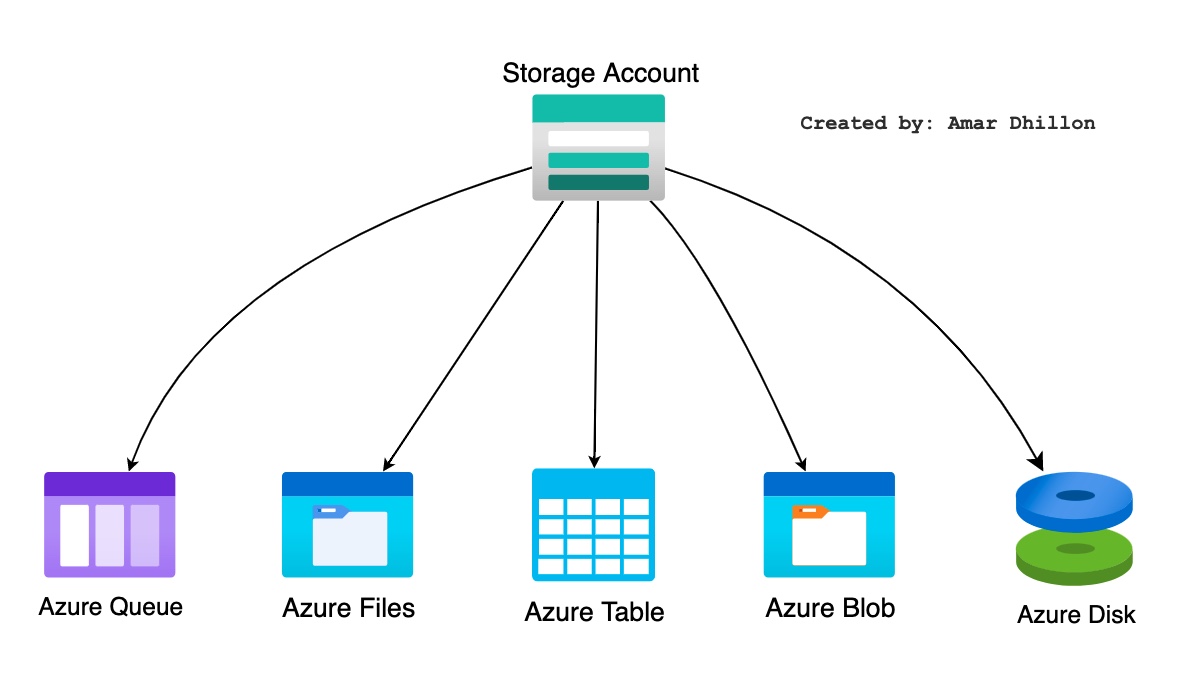

Data objects in Azure Storage are accessible from anywhere in the world over HTTP or HTTPS via a REST API. The Azure Storage platform includes the following data services:

- Azure Blobs: A massively scalable object store for text and binary data. Also includes support for big data analytics through Data Lake Storage Gen2.

- Azure Files: A cloud-based file sharing service. A file share can be mounted to multiple VMs or on-premises machines, which makes it ideal for sharing files across machines.

- Azure Queues: Allows for asynchronous message queueing between application components. Messages can be stored and retrieved using queues; each message can be up to 64 KB in size and can be accessed from anywhere in the world over HTTP or HTTPS.

- Azure Tables: A NoSQL store for schemaless storage of semi-structured data. Table storage is now also part of the Azure Cosmos DB offering: in addition to Azure Table storage, Cosmos DB provides a Table API with additional features such as turnkey failover, global distribution, automatic secondary indexes, and throughput-optimized tables.

- Azure Disks: Provides persistent block storage for Azure VMs, Azure Virtual Machine Scale Sets (VMSS), and similar compute resources.

Naming in Storage accounts

The storage account name must be unique across Azure. Each object stored in the storage account is addressed by a unique URL. When you create the storage account, you pass its name to Azure Resource Manager, and the service endpoints are built from that name. A storage account name must be 3 to 24 characters long and can contain only lowercase letters and numbers.

For example, if the name of your storage account is amarblogstorage (a name such as amar_blog_storage would be rejected, because underscores are not allowed), the default endpoints are:

- Blobs: https://amarblogstorage.blob.core.windows.net

- Tables: https://amarblogstorage.table.core.windows.net

- Files: https://amarblogstorage.file.core.windows.net

- Queues: https://amarblogstorage.queue.core.windows.net
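To make the naming rule concrete, here is a minimal Python sketch that validates a storage account name (3 to 24 lowercase letters and digits, so a name containing underscores is rejected) and derives the four default public-cloud endpoints from it. The helper `default_endpoints` is hypothetical and never contacts Azure; sovereign clouds use different domain suffixes.

```python
import re

# Storage account naming rule: 3-24 characters, lowercase letters and digits only.
_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")
_SERVICES = ("blob", "table", "file", "queue")

def default_endpoints(account_name: str) -> dict[str, str]:
    """Return the default public-cloud endpoint for each storage service.

    Hypothetical helper for illustration; it does not contact Azure.
    """
    if not _ACCOUNT_NAME.fullmatch(account_name):
        raise ValueError(f"invalid storage account name: {account_name!r}")
    return {svc: f"https://{account_name}.{svc}.core.windows.net" for svc in _SERVICES}

print(default_endpoints("amarblogstorage")["blob"])
# https://amarblogstorage.blob.core.windows.net

try:
    default_endpoints("amar_blog_storage")
except ValueError as err:
    print(err)  # invalid storage account name: 'amar_blog_storage'
```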

Things to consider when architecting

These are the things to consider for Azure Blob Storage when designing an architecture:

- Account type: v1 or v2

- Performance tier: Standard or Premium

- Replication: LRS, ZRS, GRS, RA-GRS, GZRS, RA-GZRS

- Access tier: Hot, Cool or Archive

Blob/Disk/File differences

- Azure File Storage may be mounted as an SMB volume, so that all instances of your app can work with it. Note: when these notes were written, this was not readily supported with Web Apps; you could only write to the file share via the API, not via an attached disk. At that time, each Azure File Storage share supported up to 5 TB, with a maximum throughput of 60 MB/s across the share (current limits are higher; large file shares support up to 100 TiB). It's backed by Azure blob storage, so it is just as durable as blobs.

- Azure Disks are also blob-backed (page blobs); at the time of writing they were limited to 1 TB each (current managed disks are much larger). Each disk is attached to a single VM, and throughput is higher than File Storage. A disk cannot be shared across VMs without your own solution to sync data. Once mounted and formatted, a disk is accessible like any other local drive, with no modifications to your app.

- Azure Blobs are accessed via the REST API/SDK and are not mountable as a disk/drive. Without modifying your app, you'd need to copy blob contents to local disk before operating on them (you can't just open a blob as a file and modify it).

Storage Account types

- General-purpose v2: Recommended for most scenarios. This account type provides the Blob, File, Queue and Table services. General-purpose v2 accounts deliver the lowest per-gigabyte capacity prices for Azure Storage, as well as industry-competitive transaction prices.

- General-purpose v1: Also provides the Blob, File, Queue and Table services, but is the older version of this account type. General-purpose v1 (GPv1) accounts do not support access tiering.

- BlockBlobStorage: For premium performance when storing block blobs or append blobs.

- FileStorage: For premium performance for file-only storage.

- BlobStorage: A legacy storage account type. Use a General-purpose v2 account whenever possible.

Storage account access types

Access keys: These are not preferred, because a key gives full access to the account.

- They provide unrestricted access.

- They are auto-generated.

Identity based: Uses on-premises AD or Azure AD, with RBAC instead of keys.

SAS tokens: Provide more granular control, such as permissions (access level), validity dates and allowed IP addresses.
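A SAS token is a set of query parameters appended to a resource URL, and the granular constraints listed above appear as individual fields: `sp` (permissions), `st`/`se` (start and expiry), `sip` (allowed IP range), `spr` (allowed protocol). The Python sketch below parses a made-up account SAS using only the standard library and checks whether a given time falls inside its validity window. The token and its `sig` value are placeholders, and the sketch does not verify the signature (only Azure Storage can do that with the account key); it also only handles the full `YYYY-MM-DDThh:mm:ssZ` time format.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qs

# Hypothetical account SAS; "sig" is a placeholder, not a real signature.
SAS = ("sv=2022-11-02&ss=b&srt=co&sp=rl"
       "&st=2024-01-01T00:00:00Z&se=2024-01-08T00:00:00Z"
       "&sip=203.0.113.0-203.0.113.255&spr=https&sig=PLACEHOLDER")

FIELD_NAMES = {
    "sv": "signed version", "ss": "services", "srt": "resource types",
    "sp": "permissions", "st": "start", "se": "expiry",
    "sip": "allowed IP range", "spr": "allowed protocol", "sig": "signature",
}

def parse_sas(token: str) -> dict[str, str]:
    """Split a SAS query string into {parameter: value} (first value wins)."""
    return {key: values[0] for key, values in parse_qs(token.lstrip("?")).items()}

def _ts(value: str) -> datetime:
    # SAS times are UTC; this sketch only accepts e.g. 2024-01-08T00:00:00Z.
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)

def is_within_window(fields: dict[str, str], now: datetime) -> bool:
    """True if `now` is after the optional start (st) and before the expiry (se)."""
    start = _ts(fields["st"]) if "st" in fields else None
    return (start is None or now >= start) and now < _ts(fields["se"])

fields = parse_sas(SAS)
for key, value in fields.items():
    print(f"{FIELD_NAMES.get(key, key):>16}: {value}")
print(is_within_window(fields, datetime(2024, 1, 3, tzinfo=timezone.utc)))  # True
```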

Azure Blob

Because Azure Blob Storage is designed for unstructured data, you can store any type of text or binary data in it. Blob storage is also referred to as object storage.

Tip

$logs is a system container (used by Storage Analytics logging) that you should not modify.

Blob structure is shown below

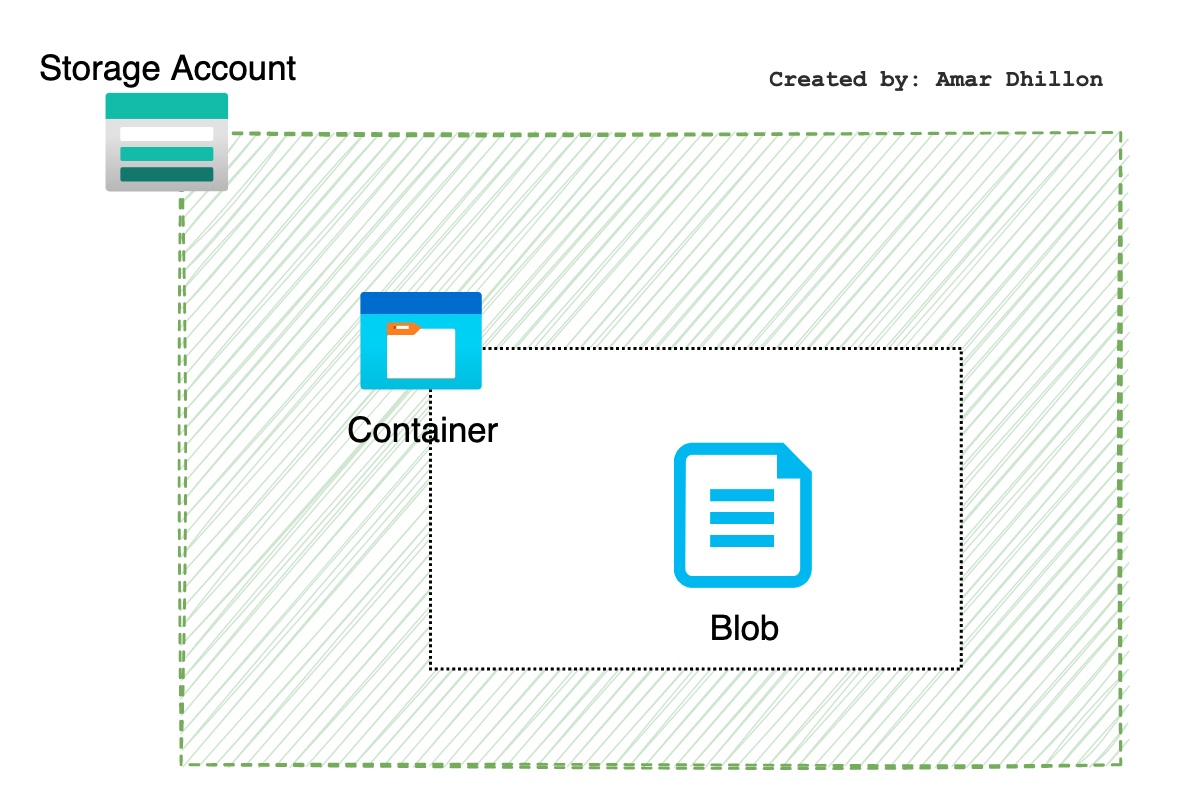

Blob Storage comprises three resources:

- Storage account: The top-level namespace for your data. Its access keys provide complete access, so using them directly is not recommended; store them in Key Vault.

- Container: Groups a set of blobs within the storage account.

- Blob: The object stored in a container.
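The account/container/blob hierarchy shows up directly in a blob's URL: `https://<account>.blob.core.windows.net/<container>/<blob>`. The small Python sketch below (the helper `split_blob_url` is hypothetical and assumes the default public-cloud blob endpoint) splits a URL into those three parts. Note that a blob name may itself contain `/`; in the flat namespace those slashes only form virtual directories, so everything after the container belongs to the blob name.

```python
from urllib.parse import unquote, urlsplit

_BLOB_SUFFIX = ".blob.core.windows.net"

def split_blob_url(url: str) -> tuple[str, str, str]:
    """Split https://<account>.blob.core.windows.net/<container>/<blob> into its parts.

    Illustrative sketch: only handles the default public-cloud blob endpoint.
    """
    parts = urlsplit(url)
    host = parts.hostname or ""
    if not host.endswith(_BLOB_SUFFIX):
        raise ValueError(f"not a default blob endpoint: {url!r}")
    account = host[: -len(_BLOB_SUFFIX)]
    # First path segment = container; the rest (slashes included) = blob name.
    container, _, blob = parts.path.lstrip("/").partition("/")
    return account, container, unquote(blob)

print(split_blob_url("https://amarblogstorage.blob.core.windows.net/images/2024/logo.png"))
# ('amarblogstorage', 'images', '2024/logo.png')
```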

Default access

When you create a container, you need to specify the public access level, which determines whether the data stored in the container is exposed publicly. By default, the contents are private.

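Types of blobs

Block blobs

Store text and binary data, such as documents, images, and audio and video files.

Append blobs

Made up of blocks like block blobs, but optimized for append operations; ideal for log files.

Page blobs

Store random-access files; used for the VHD files that serve as VM disks.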
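Block blobs are built from individually uploaded blocks: a client stages blocks (the REST Put Block operation) and then commits an ordered list of block IDs (Put Block List). Block IDs must be Base64 strings, and all IDs within one blob must have the same length. The Python sketch below simulates that flow locally; `plan_blocks` and `commit` are hypothetical helpers, not SDK calls, and nothing is sent to Azure.

```python
import base64

def plan_blocks(data: bytes, block_size: int) -> list[tuple[str, bytes]]:
    """Split data into (block_id, chunk) pairs, as a client would before Put Block.

    The index is zero-padded to a fixed width before Base64-encoding so that
    every block ID in the blob has the same length.
    """
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    blocks = []
    for index, offset in enumerate(range(0, len(data), block_size)):
        block_id = base64.b64encode(f"{index:06d}".encode()).decode()
        blocks.append((block_id, data[offset:offset + block_size]))
    return blocks

def commit(blocks: list[tuple[str, bytes]], block_list: list[str]) -> bytes:
    """Local stand-in for Put Block List: the blob becomes the listed blocks, in order."""
    staged = dict(blocks)
    return b"".join(staged[block_id] for block_id in block_list)

payload = b"hello, block blobs!"
blocks = plan_blocks(payload, block_size=8)
print([block_id for block_id, _ in blocks])  # ['MDAwMDAw', 'MDAwMDAx', 'MDAwMDAy']
print(commit(blocks, [block_id for block_id, _ in blocks]) == payload)  # True
```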

Container/blob visibility

We have the following options to control the visibility of containers and blobs:

- Private: The default option; no anonymous access is allowed to containers and blobs.

- Blob (public): Grants anonymous public read access to the blobs only.

- Container (public): Grants anonymous public read and list access to the container and all the blobs stored inside it.
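The three visibility levels can be summarized as a small decision function. This Python sketch is a hypothetical model of the rules listed above, covering only two anonymous operations (reading a blob and listing the container's blobs); it ignores account-level settings that can disable anonymous access altogether.

```python
from enum import Enum

class PublicAccess(Enum):
    PRIVATE = "private"      # no anonymous access (default)
    BLOB = "blob"            # anonymous read of blobs only
    CONTAINER = "container"  # anonymous read of blobs + listing the container

def anonymous_allowed(level: PublicAccess, operation: str) -> bool:
    """Would an anonymous request succeed? operation is 'read_blob' or 'list_blobs'."""
    if operation not in ("read_blob", "list_blobs"):
        raise ValueError(f"unknown operation: {operation}")
    if level is PublicAccess.CONTAINER:
        return True
    if level is PublicAccess.BLOB:
        return operation == "read_blob"
    return False  # PRIVATE

for level in PublicAccess:
    print(level.value,
          anonymous_allowed(level, "read_blob"),
          anonymous_allowed(level, "list_blobs"))
```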

Storage Lifecycle

Blob Access Tiers

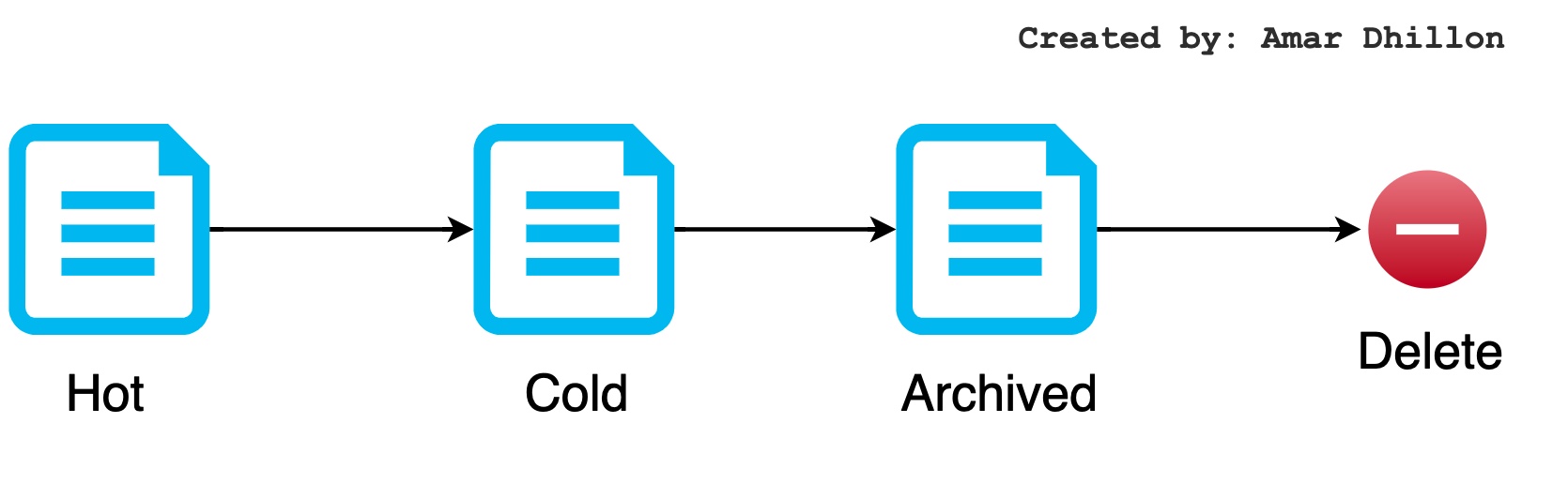

The access tiers are defined based on the usage pattern, or frequency of access. A newly created file is typically accessed frequently; gradually the frequency of access drops, and eventually you stop accessing the file. However, you may still need to keep it because of data retention policies and for auditing purposes. Choosing the right access tier helps you optimize the cost of data storage and data access.

Hot (Online)

Optimized for frequent access of objects. From a cost perspective, accessing data in the Hot tier is the least expensive compared to the other tiers; however, the data storage costs are higher. When you create a new storage account, this is the default tier.

Cool (Online)

Optimized for storing data that is accessed infrequently and stored for at least 30 days. Storing data in the Cool tier is cheaper than in the Hot tier; however, accessing data in the Cool tier is more expensive than in the Hot tier.

Archive (Offline)

Optimized for storing data that can tolerate hours of retrieval latency and will remain in the Archive tier for at least 180 days. When it comes to storing data, the Archive tier is the most cost-effective tier.
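The minimum durations matter because moving or deleting a blob before its tier's minimum period ends incurs a prorated early deletion charge for the remaining days. For example, a blob archived for 45 days and then deleted is billed for the remaining 135 days (180 minus 45) of archive storage. This Python sketch only computes those remaining days from the minimums stated above; it knows nothing about actual prices.

```python
# Minimum storage durations (days) from the tier descriptions above.
MIN_DAYS = {"hot": 0, "cool": 30, "archive": 180}

def early_deletion_days(tier: str, days_in_tier: int) -> int:
    """Days still billed if a blob is deleted or moved out of `tier` after `days_in_tier` days."""
    if days_in_tier < 0:
        raise ValueError("days_in_tier must be non-negative")
    return max(0, MIN_DAYS[tier] - days_in_tier)

print(early_deletion_days("archive", 45))  # 135
print(early_deletion_days("cool", 40))     # 0: minimum already met
```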

How to access data from Archive tier?

While a blob is in the archive tier, it can't be read or modified. To read or download a blob in the archive tier, you must first rehydrate it to an online tier, either hot or cool. Data in the archive tier can take up to 15 hours to rehydrate, depending on the priority you specify for the rehydration operation.

Only storage accounts that are configured for LRS, GRS, or RA-GRS support moving blobs to the archive tier. The archive tier isn't supported for ZRS, GZRS, or RA-GZRS accounts.

Be careful while choosing access tier

If you set the access tier at the storage account level, you have only two choices: Hot and Cool. This default tier is inherited by all blobs in the account that don't have a tier set explicitly. The Archive tier can be set only at the individual object level.
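The inheritance rule above can be expressed as a tiny resolution function: a blob's effective tier is its explicitly set tier if there is one, otherwise the account default, and Archive is only valid as an explicit blob-level tier. This is a hypothetical Python model of the rule as described in these notes, not an Azure API.

```python
from typing import Optional

ACCOUNT_DEFAULTS = {"hot", "cool"}         # tiers allowed as the account-level default
BLOB_TIERS = {"hot", "cool", "archive"}    # tiers that can be set on an individual blob

def effective_tier(account_default: str, blob_tier: Optional[str] = None) -> str:
    """Return the blob's explicit tier, or the account default it inherits."""
    if account_default not in ACCOUNT_DEFAULTS:
        raise ValueError("the account-level default tier must be hot or cool")
    if blob_tier is None:
        return account_default  # inherited from the account setting
    if blob_tier not in BLOB_TIERS:
        raise ValueError(f"unknown tier: {blob_tier}")
    return blob_tier

print(effective_tier("hot"))             # hot (inherited)
print(effective_tier("hot", "archive"))  # archive (set on the blob itself)
```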

Tiering Policy

Azure Storage lifecycle management offers a rule-based policy that you can use to transition blob data to the appropriate access tiers or to expire data at the end of the data lifecycle.

More than one action on the same blob

If you define more than one action on the same blob, lifecycle management applies the least expensive action to the blob. For example, the delete action is cheaper than tierToArchive, and tierToArchive is cheaper than tierToCool.
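A lifecycle management policy is a JSON document of rules, each with filters and actions. The Python sketch below builds one hypothetical rule (move block blobs under a `logs/` prefix to Cool after 30 days, to Archive after 90, and delete them after 365 days since last modification) and then models the "least expensive action wins" behavior described above. The container name `mycontainer` and rule name are assumptions; prefixes in `prefixMatch` start with the container name. The resolver is an illustration of the documented precedence, not how the service is implemented.

```python
import json
from typing import Optional

# Hypothetical policy; "mycontainer" and the rule name are placeholders.
policy = {
    "rules": [
        {
            "enabled": True,
            "name": "age-out-logs",
            "type": "Lifecycle",
            "definition": {
                "filters": {"blobTypes": ["blockBlob"], "prefixMatch": ["mycontainer/logs/"]},
                "actions": {
                    "baseBlob": {
                        "tierToCool": {"daysAfterModificationGreaterThan": 30},
                        "tierToArchive": {"daysAfterModificationGreaterThan": 90},
                        "delete": {"daysAfterModificationGreaterThan": 365},
                    }
                },
            },
        }
    ]
}

# Cheapest first: delete < tierToArchive < tierToCool.
COST_ORDER = ("delete", "tierToArchive", "tierToCool")

def action_for(base_blob_actions: dict, days_since_modified: int) -> Optional[str]:
    """When several conditions match, return the cheapest matching action."""
    for action in COST_ORDER:
        condition = base_blob_actions.get(action)
        if condition and days_since_modified > condition["daysAfterModificationGreaterThan"]:
            return action
    return None

actions = policy["rules"][0]["definition"]["actions"]["baseBlob"]
print(action_for(actions, 100))   # tierToArchive (tierToCool also matches, but costs more)
print(json.dumps(policy, indent=2))
```

The printed JSON could be saved to a file and applied with something like `az storage account management-policy create --account-name <account> --resource-group <group> --policy @policy.json`.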

Object replication

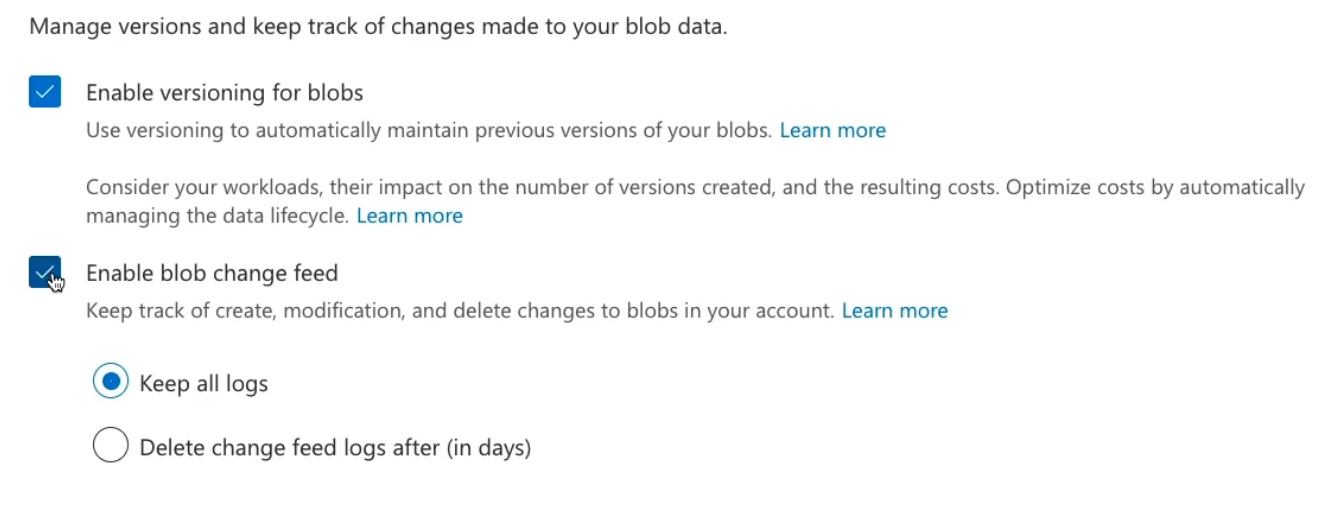

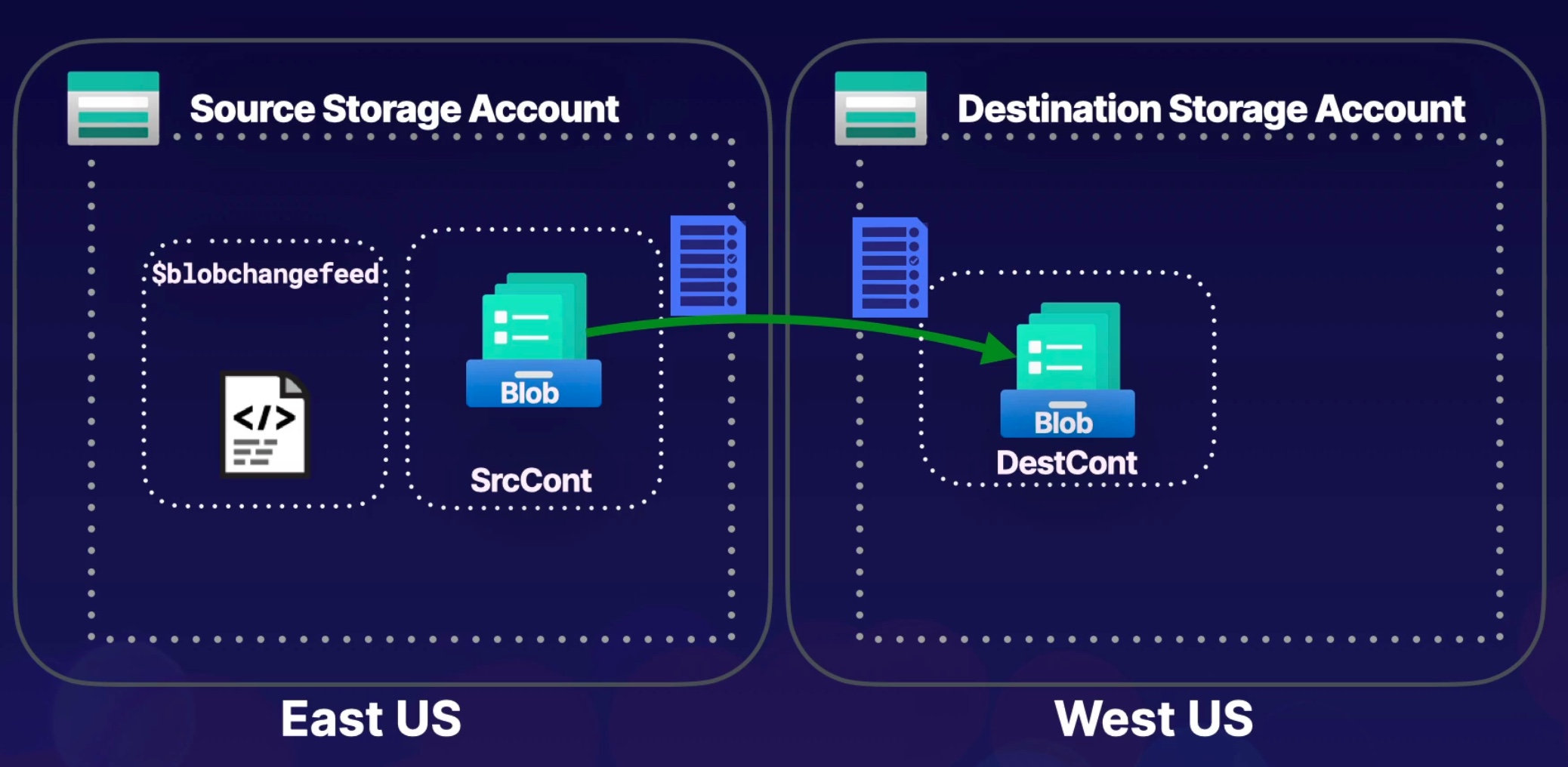

Object replication asynchronously copies block blobs in a container according to rules that you configure. The contents of the blob, any versions associated with the blob, and the blob's metadata and properties are all copied from the source container to the destination container.

Requirements for replication

Object replication requires the following Azure Storage features to be enabled:

- Change feed: Must be enabled on the source account.

- Blob versioning: Must be enabled on both the source and destination accounts.

The replication process between two storage accounts is illustrated in the diagrams at the start of this section.
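Before configuring replication rules, it helps to check the prerequisites listed above. This Python sketch uses a hypothetical `AccountFeatures` record (not an SDK type) to report which settings still need to be enabled: change feed on the source account, and blob versioning on both accounts.

```python
from dataclasses import dataclass

@dataclass
class AccountFeatures:
    """Hypothetical summary of the blob service settings that matter here."""
    name: str
    change_feed: bool
    versioning: bool

def replication_blockers(source: AccountFeatures, destination: AccountFeatures) -> list[str]:
    """List the unmet object replication prerequisites (an empty list means ready)."""
    problems = []
    if not source.change_feed:
        problems.append(f"{source.name}: enable change feed (source account)")
    for account in (source, destination):
        if not account.versioning:
            problems.append(f"{account.name}: enable blob versioning")
    return problems

src = AccountFeatures("amarsourcestorage", change_feed=True, versioning=True)
dst = AccountFeatures("amardeststorage", change_feed=False, versioning=False)
print(replication_blockers(src, dst))  # ['amardeststorage: enable blob versioning']
```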

Immutable blob (WORM)

Immutable storage for Azure Blob Storage enables users to store business-critical data in a WORM (Write Once, Read Many) state. While in a WORM state, data cannot be modified or deleted for a user-specified interval. By configuring immutability policies for blob data, you can protect your data from overwrites and deletes.

Immutable storage for Azure Blob Storage supports 2 types of immutability policies:

- Time-based retention policies: With a time-based retention policy, users can set policies to store data for a specified interval. When a time-based retention policy is set, objects can be created and read, but not modified or deleted. After the retention period has expired, objects can be deleted but not overwritten.

- Legal hold policies: A legal hold stores immutable data until the legal hold is explicitly cleared. When a legal hold is set, objects can be created and read, but not modified or deleted.
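The two policy types combine as follows: a legal hold blocks deletion until it is cleared, while a time-based policy blocks deletion until its retention period expires and never permits overwrites. This Python sketch is a hypothetical model of those rules as stated above (`WormState`, `can_delete` and `can_overwrite` are illustrative names), not an Azure API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

@dataclass
class WormState:
    """Hypothetical model of the immutability policies on one blob."""
    retention_until: Optional[datetime] = None  # expiry of a time-based retention policy
    legal_hold: bool = False                     # stays until explicitly cleared

def can_delete(state: WormState, now: datetime) -> bool:
    if state.legal_hold:
        return False  # a legal hold blocks deletes regardless of retention
    return state.retention_until is None or now >= state.retention_until

def can_overwrite(state: WormState) -> bool:
    # Per the rules above, a time-based policy never allows overwrites, even after expiry.
    return not state.legal_hold and state.retention_until is None

created = datetime(2024, 1, 1)
state = WormState(retention_until=created + timedelta(days=30))
print(can_delete(state, datetime(2024, 1, 15)))  # False: still within retention
print(can_delete(state, datetime(2024, 2, 15)))  # True: retention expired
print(can_overwrite(state))                      # False
state.legal_hold = True
print(can_delete(state, datetime(2024, 2, 15)))  # False: legal hold wins
```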

Encryption scopes

Encryption scopes enable you to manage encryption with a key that is scoped to a container or an individual blob. You can use encryption scopes to create secure boundaries between data that resides in the same storage account but belongs to different customers.

How encryption scopes work?

By default, a storage account is encrypted with a key that is scoped to the entire storage account. When you define an encryption scope, you specify a key that may be scoped to a container or an individual blob.

When the encryption scope is applied to a blob, the blob is encrypted with that key. When the encryption scope is applied to a container, it serves as the default scope for blobs in that container, so that all blobs that are uploaded to that container may be encrypted with the same key.

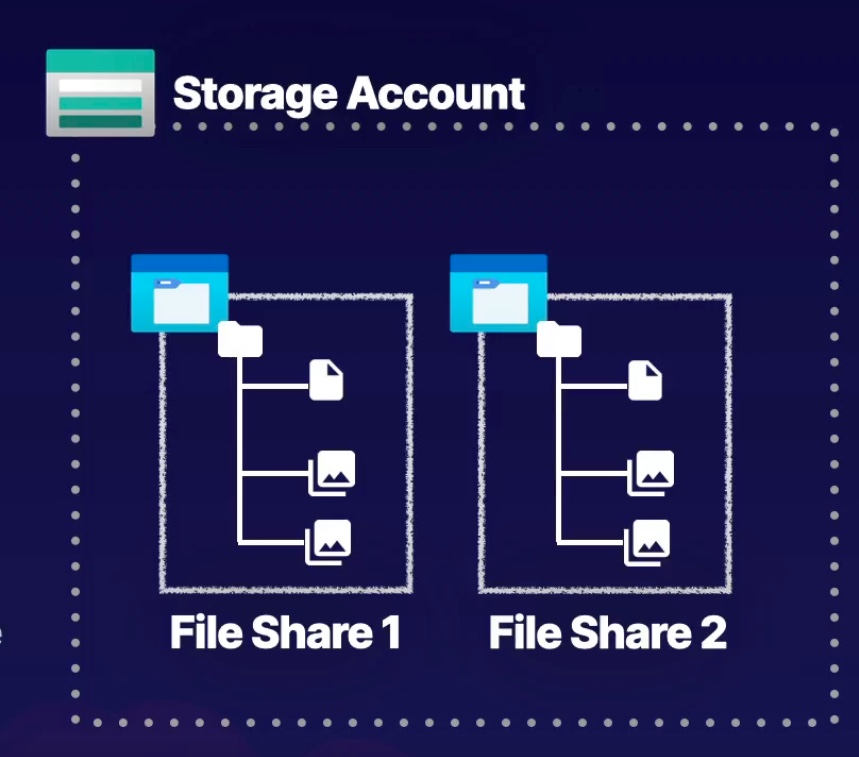
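The precedence described above (blob-level scope, else the container's default scope, else the account-wide key) can be sketched as a small resolution function. This Python model is hypothetical: `ACCOUNT_SCOPE` is just a label for the account-level key, and the `enforce_container_scope` flag models the container option that forces all blobs to use its default scope, in which case an upload requesting a different scope is rejected.

```python
from typing import Optional

# Hypothetical label for the storage account's own (account-wide) encryption key.
ACCOUNT_SCOPE = "account-default-key"

def scope_for_upload(container_default: Optional[str],
                     requested: Optional[str] = None,
                     enforce_container_scope: bool = False) -> str:
    """Return the encryption scope that would encrypt a newly uploaded blob."""
    if enforce_container_scope and container_default is not None:
        # The container forces every blob to use its default scope.
        if requested not in (None, container_default):
            raise PermissionError("container enforces its default encryption scope")
        return container_default
    if requested is not None:
        return requested           # scope specified on the blob itself
    if container_default is not None:
        return container_default   # inherited from the container
    return ACCOUNT_SCOPE           # fall back to account-level encryption

print(scope_for_upload(None))                                     # account-default-key
print(scope_for_upload("customer-a-scope"))                       # customer-a-scope
print(scope_for_upload("customer-a-scope", "customer-b-scope"))   # customer-b-scope
```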

Azure File

Azure Files has a hierarchical file structure of directories and files.

```
# Generic structure (UNC path used when mounting over SMB)
\\<storageAccountName>.file.core.windows.net\<fileShareName>

# if storageAccountName == amarteststorage and fileShareName == datafile01, then the path is
\\amarteststorage.file.core.windows.net\datafile01
```

Differences between Azure Files and Azure Blobs

- Azure Blobs uses a flat namespace that includes containers and objects. Azure Files uses directory objects, as in traditional file shares.

- Azure Blobs is accessed via containers, and Azure Files is accessed through file shares.

- Azure Blobs is accessed via an HTTP/HTTPS connection, and Azure Files is accessed via the SMB/NFS protocol when mounted to a virtual machine.

- Azure Blobs doesn't need to be mounted and can be accessed directly from any client that supports HTTP calls. Azure Files needs to be mounted to virtual machines before working with the data. That said, you can still manage the files in Azure Files via tools like the Azure portal and Azure Storage Explorer without mounting the share.

Azure File Share

File shares are not something new; you still have on-premises file shares that serve your enterprise needs and requirements. Azure File Share is a cloud-based file share that enables you to access the file share from any computer anywhere in the world.

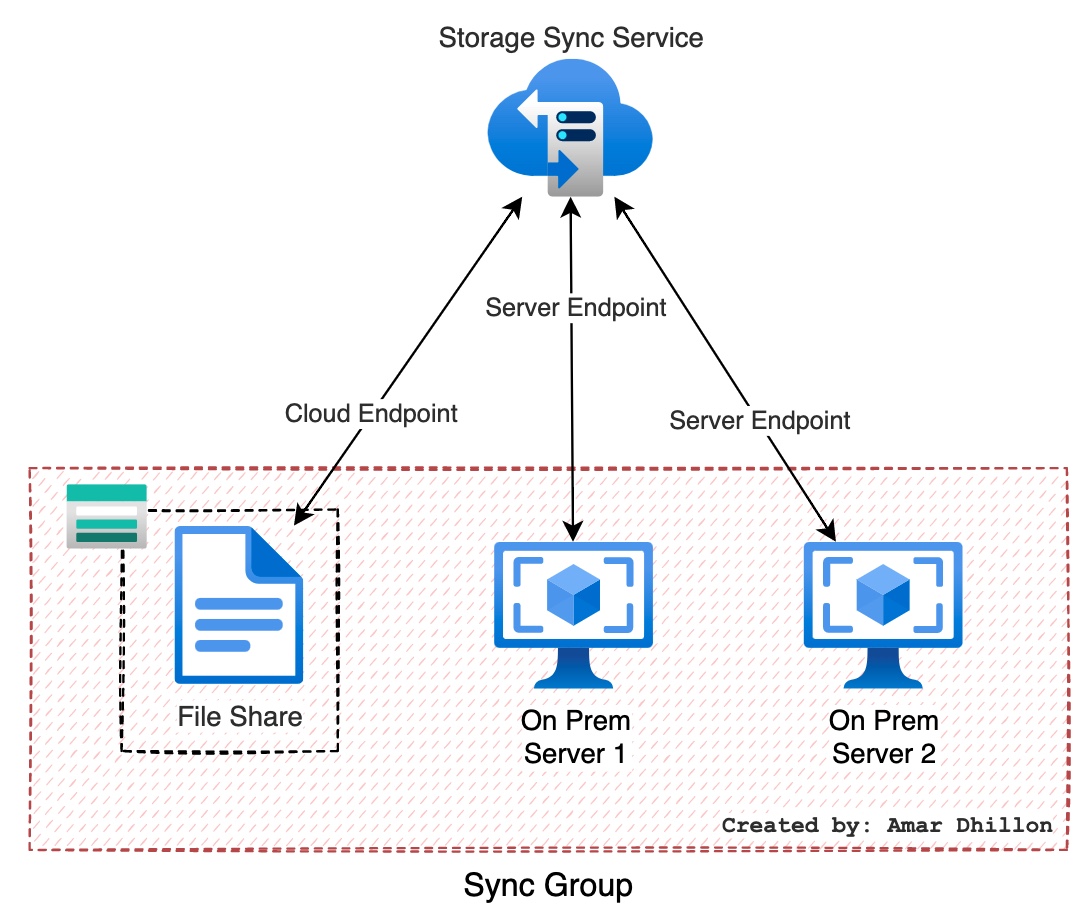

Azure File Sync

With Azure File Sync, you use Azure Files as a centralized location for storing files. In simple terms, you can synchronize the files you have on-premises with an Azure file share.

An Azure File Sync deployment has these components:

- Azure file share: A serverless cloud file share that provides the cloud endpoint of an Azure File Sync relationship.

- Server endpoint: The path on the Windows Server that is being synced to an Azure file share. This can be a specific folder on a volume or the root of the volume. Multiple server endpoints can exist on the same volume if their namespaces do not overlap (as shown in the figure).

- Sync group: The object that defines the sync relationship between a cloud endpoint (an Azure file share) and server endpoints. Endpoints within a sync group are kept in sync with each other. For example, if you have two distinct sets of files that you want to manage with Azure File Sync, you would create two sync groups and add different endpoints to each sync group.
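The topology rules above can be checked mechanically: a sync group has exactly one cloud endpoint and at least one server endpoint, and server endpoints on the same server must not have overlapping namespaces (one path equal to or nested inside another). This Python sketch uses hypothetical helper names and standard-library path handling only; it does not call the Storage Sync Service.

```python
from pathlib import PureWindowsPath

def paths_overlap(a: str, b: str) -> bool:
    """True if two paths are equal or one is nested inside the other (case-insensitive)."""
    pa, pb = PureWindowsPath(a), PureWindowsPath(b)
    return pa == pb or pa in pb.parents or pb in pa.parents

def check_sync_group(cloud_endpoints: list[str], server_endpoints: list[tuple[str, str]]) -> None:
    """Exactly one cloud endpoint and at least one (server, path) server endpoint."""
    if len(cloud_endpoints) != 1:
        raise ValueError("a sync group needs exactly one cloud endpoint (Azure file share)")
    if not server_endpoints:
        raise ValueError("a sync group needs at least one server endpoint")

def check_server_namespaces(paths_on_server: list[str]) -> None:
    """Server endpoints on the same server, across all sync groups, must not overlap."""
    for i, a in enumerate(paths_on_server):
        for b in paths_on_server[i + 1:]:
            if paths_overlap(a, b):
                raise ValueError(f"server endpoints overlap: {a} and {b}")

check_sync_group(["data"], [("Server1", r"D:\Data")])
check_server_namespaces([r"D:\Data", r"D:\Logs"])  # fine: separate folders
try:
    check_server_namespaces([r"D:\Data", r"D:\Data\Archive"])
except ValueError as err:
    print(err)  # server endpoints overlap: D:\Data and D:\Data\Archive
```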

File Share steps

Steps to synchronize the files in the file share named data to an on-premises server named Server1:

- Step 1: Install the Azure File Sync agent on Server1. The Azure File Sync agent is a downloadable package that enables Windows Server to be synced with an Azure file share.

- Step 2: Register Server1 with the Storage Sync Service. Registering your Windows Server with a Storage Sync Service establishes a trust relationship between your server and the Storage Sync Service.

- Step 3: Create a sync group and a cloud endpoint. A sync group defines the sync topology for a set of files, and endpoints within a sync group are kept in sync with each other. A sync group must contain one cloud endpoint, which represents an Azure file share, and one or more server endpoints. A server endpoint represents a path on a registered server.

Storage Security

Remember

You can grant fine-grained, time-bound access to data objects by using a SAS token instead of access keys.

Authorization options

The following are the authorization options available to Azure Storage:

- Azure AD: You can authorize access to Azure Storage via role-based access control (RBAC). With RBAC, you can assign fine-grained access to users, groups, or applications.

- Shared Key: Every storage account has two access keys: primary and secondary. A key is used to sign each request, and the resulting signature is sent in the Authorization header of the API calls.

- Shared Access Signatures (SAS): You can limit access to services with specified permissions and over a specified timeframe.

- Anonymous access to containers and blobs: If you set the access level to Blob or Container, objects can be accessed publicly without passing keys or granting authorization.
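With Shared Key authorization the key itself is never sent: the client computes Base64(HMAC-SHA256(key, string-to-sign)) using the Base64-decoded account key, and sends `SharedKey <account>:<signature>` in the Authorization header. The Python sketch below shows only that signing step. The real string-to-sign is a specific concatenation of the HTTP verb, selected headers and the canonicalized resource, which is deliberately omitted here; the key and the short string used in the example are made up.

```python
import base64
import hashlib
import hmac

def shared_key_header(account_name: str, account_key_b64: str, string_to_sign: str) -> str:
    """Return the Authorization header value for Shared Key authorization.

    Building the exact string-to-sign (verb, headers, canonicalized resource)
    is out of scope for this sketch; it is passed in ready-made.
    """
    key = base64.b64decode(account_key_b64)
    digest = hmac.new(key, string_to_sign.encode("utf-8"), hashlib.sha256).digest()
    signature = base64.b64encode(digest).decode("ascii")
    return f"SharedKey {account_name}:{signature}"

# Made-up key and a simplified string-to-sign, for illustration only.
demo_key = base64.b64encode(b"not-a-real-account-key").decode()
print(shared_key_header("amarblogstorage", demo_key, "GET\n\n/amarblogstorage/images"))
```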

AzCopy

AzCopy is a next-generation command-line tool for copying data from or to Azure Blob and Azure Files.

Behind the scenes, Azure Storage Explorer uses AzCopy to accomplish all the data transfer operations.

With AzCopy you can copy data in the following scenarios:

- Copy data from a local machine to Azure Blobs or Azure Files

- Copy data from Azure Blobs or Azure Files to a local machine

- Copy data between storage accounts
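The three scenarios map onto the same `azcopy copy <source> <destination>` command, with `--recursive` for whole directories or containers. This Python sketch only builds the argument lists (it does not run AzCopy); the paths, account names and the `<SAS>` placeholder are hypothetical, and `<SAS>` stands for a SAS token you would generate yourself.

```python
import shlex

def azcopy_copy_args(source: str, destination: str, recursive: bool = False) -> list[str]:
    """Build the argument list for `azcopy copy <source> <destination> [--recursive]`."""
    args = ["azcopy", "copy", source, destination]
    if recursive:
        args.append("--recursive")  # copy a whole directory/container tree
    return args

# Hypothetical locations; "<SAS>" is a placeholder for a real SAS token.
CONTAINER = "https://amarblogstorage.blob.core.windows.net/reports"
scenarios = {
    "local -> blob": azcopy_copy_args(r"C:\data\reports", f"{CONTAINER}?<SAS>", recursive=True),
    "blob -> local": azcopy_copy_args(f"{CONTAINER}/summary.csv?<SAS>", r"C:\data\summary.csv"),
    "account -> account": azcopy_copy_args(
        f"{CONTAINER}?<SAS>",
        "https://amarbackupstorage.blob.core.windows.net/reports?<SAS>",
        recursive=True,
    ),
}
for label, args in scenarios.items():
    print(f"{label}: {shlex.join(args)}")  # POSIX-shell quoting, for display only
```

To actually run one of these, pass the list to `subprocess.run(args, check=True)` on a machine where AzCopy is installed; using a list rather than a single string avoids shell-quoting problems with the `?` and `&` characters in SAS URLs.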

Custom Domain Configuration

Accessing the service through the default domain can be cumbersome, so customers often prefer to use their own custom domains to represent their storage endpoints.