K8S Security¶

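Authentication¶

- Authentication defines who can access the cluster.

- For machines (for example, processes running in pods), we can create service accounts. Kubernetes does not create or manage user accounts, but it does create and manage service accounts.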
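
As a minimal sketch of the point above, a service account is declared like any other namespaced object. The name `build-robot` and the `default` namespace are illustrative assumptions, not values taken from these notes.

```yaml
# Hypothetical example: the name "build-robot" is illustrative only.
apiVersion: v1
kind: ServiceAccount
metadata:
  name: build-robot
  namespace: default
```

A pod can then run under this identity by setting `spec.serviceAccountName` to the same name.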

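Authorization¶

- Authorization defines what an authenticated identity is allowed to do, most commonly using RBAC.

The available authorization modes, selected with the kube-apiserver `--authorization-mode` flag, are: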

AlwaysAllow¶

Allows all requests.

AlwaysDeny¶

Denies all requests.

ABAC¶

Attribute-based access control (ABAC) defines an access control paradigm whereby access rights are granted to users through the use of policies which combine attributes together.

RBAC¶

Role-based access control (RBAC) is a method of regulating access to computer or network resources based on the roles of individual users within your organization. The RBAC API declares four kinds of Kubernetes object:

- Role

- ClusterRole

- RoleBinding

- ClusterRoleBinding

A Role is namespaced; a ClusterRole is not.

A Role always sets permissions within a particular namespace; when you create a Role, you have to specify the namespace it belongs in.

ClusterRole, by contrast, is a non-namespaced resource. The resources have different names (Role and ClusterRole) because a Kubernetes object always has to be either namespaced or not namespaced; it can't be both.

Role¶

    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      namespace: default
      name: pod-reader
    rules:
    - apiGroups: [""] # "" indicates the core API group
      resources: ["pods"]
      verbs: ["get", "watch", "list"]

RoleBinding¶

    apiVersion: rbac.authorization.k8s.io/v1
    # This role binding allows "amar" to read pods in the "default" namespace.
    # You need to already have a Role named "pod-reader" in that namespace.
    kind: RoleBinding
    metadata:
      name: read-pods
      namespace: default
    subjects:
    # You can specify more than one "subject"
    - kind: User
      name: amar # "name" is case-sensitive
      apiGroup: rbac.authorization.k8s.io
    roleRef:
      # "roleRef" specifies the binding to a Role / ClusterRole
      kind: Role # this must be Role or ClusterRole
      name: pod-reader # this must match the name of the Role or ClusterRole you wish to bind to
      apiGroup: rbac.authorization.k8s.io

ClusterRole¶
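
A ClusterRole looks like a Role without a namespace. The sketch below is one possible example, not taken from these notes: the name `secret-reader` is hypothetical, and the rule grants read access to Secrets across all namespaces.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  # No "namespace" field: ClusterRoles are not namespaced
  name: secret-reader # hypothetical name
rules:
- apiGroups: [""] # "" indicates the core API group
  resources: ["secrets"]
  verbs: ["get", "watch", "list"]
```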

ClusterRoleBinding¶
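
A ClusterRoleBinding grants a ClusterRole across the whole cluster. The sketch below assumes a ClusterRole named `secret-reader` already exists; that name, the binding name, and the `manager` group are all hypothetical.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
# This binding would allow anyone in the "manager" group to read
# Secrets in any namespace (assuming "secret-reader" grants that).
kind: ClusterRoleBinding
metadata:
  name: read-secrets-global # hypothetical name
subjects:
- kind: Group
  name: manager # hypothetical group; "name" is case-sensitive
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: secret-reader # must match an existing ClusterRole
  apiGroup: rbac.authorization.k8s.io
```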

Node Authorizer¶

Requests from kubelets are handled by the Node authorizer. Node authorization is a special-purpose authorization mode that specifically authorizes API requests made by kubelets.

Note

The Node authorizer is used for access from within the cluster.

Webhook¶

Delegates authorization decisions to an external service, for example Open Policy Agent.
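
In webhook mode the API server reads the location of the external service from a file in kubeconfig format, passed with `--authorization-webhook-config-file`. The sketch below shows the general shape of that file; every name, path, and URL in it is a placeholder assumption.

```yaml
apiVersion: v1
kind: Config
# "clusters" refers to the remote authorization service
clusters:
- name: remote-authz-service # placeholder name
  cluster:
    certificate-authority: /path/to/ca.pem # CA used to verify the remote service
    server: https://authz.example.com/authorize # placeholder URL; must use https
# "users" refers to the API server's own webhook client credentials
users:
- name: api-server # placeholder name
  user:
    client-certificate: /path/to/cert.pem
    client-key: /path/to/key.pem
current-context: webhook
contexts:
- name: webhook
  context:
    cluster: remote-authz-service
    user: api-server
```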

TLS certificates¶

An SSL/TLS certificate is a digital object that allows systems to verify the identity & subsequently establish an encrypted network connection to another system using the Secure Sockets Layer/Transport Layer Security (SSL/TLS) protocol. Certificates are used within a cryptographic system known as a Public Key Infrastructure (PKI).

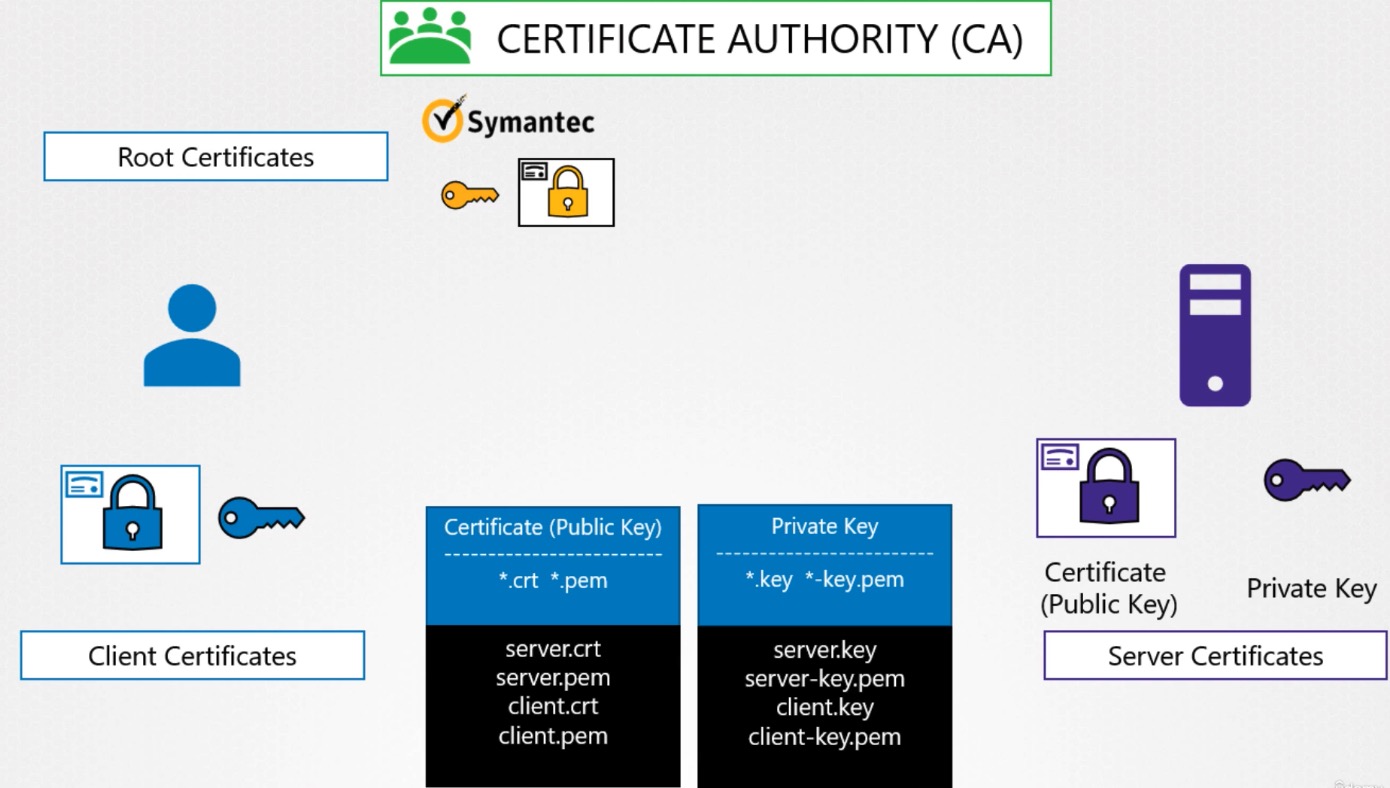

PKI provides a way for one party to establish the identity of another party using certificates, provided they both trust a third party known as a certificate authority (CA).

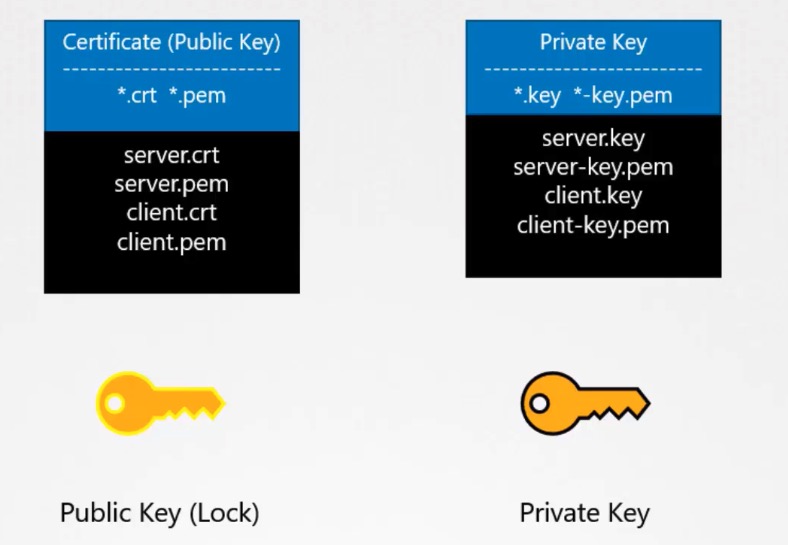

The formats for public and private keys are shown below:

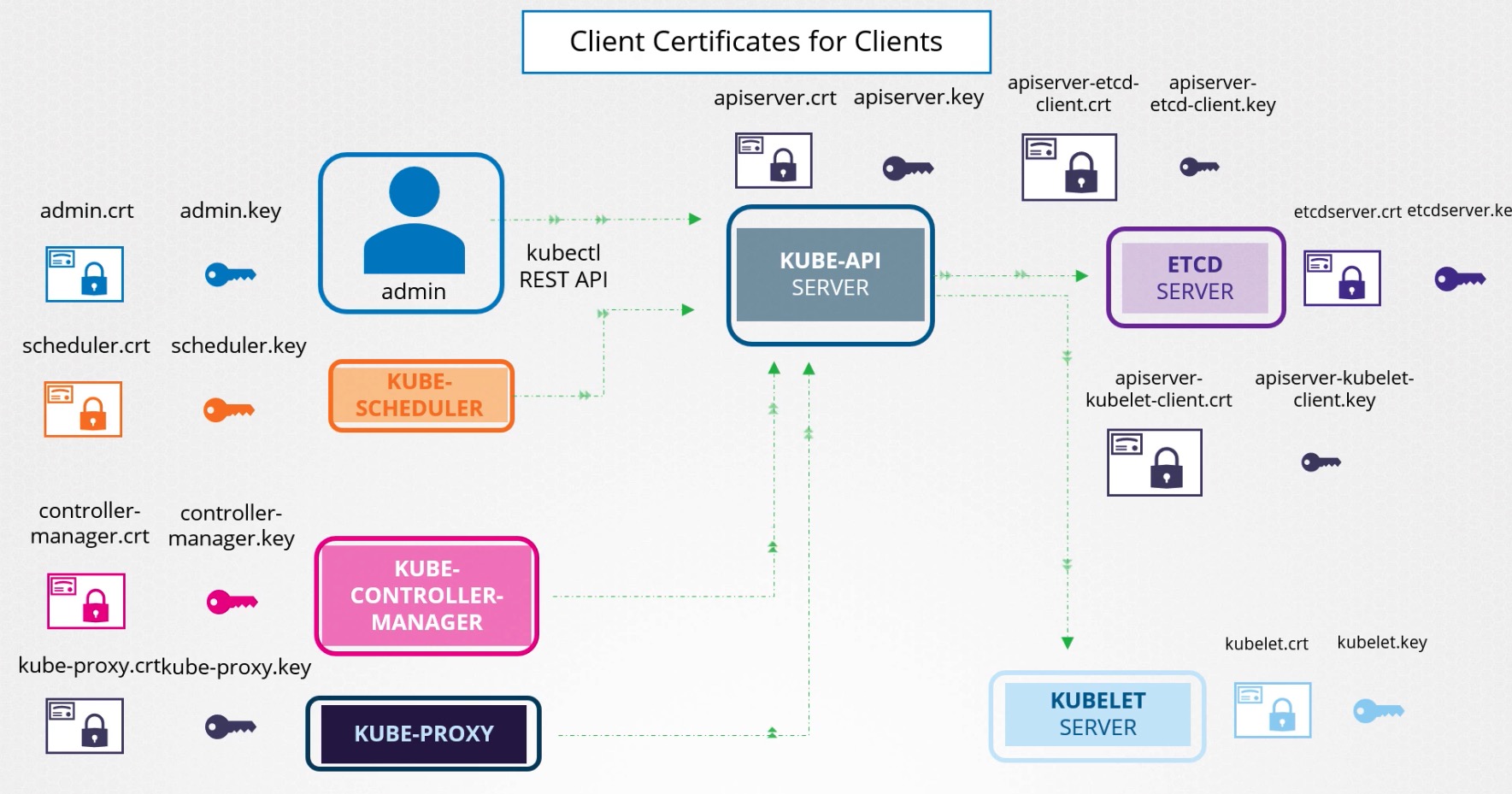

The kinds of certificates involved are:

- Client certificate

- Server certificate

- Root certificate (CA)

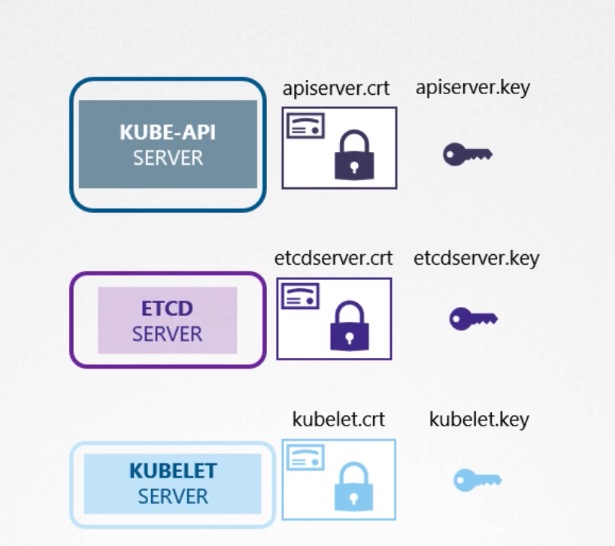

Various keys used by server in K8s are

Put it all together

View/Approve CSRs¶

    # View CSRs
    kubectl get csr

    # See more details for a CSR
    kubectl get csr <amar-dhillon> -o yaml

    # Approve a CSR
    kubectl certificate approve <certificate-name>

    # Deny a CSR
    kubectl certificate deny <certificate-name>

    # Delete a CSR
    kubectl delete csr <certificate-name>

    controlplane ~ ➜ k delete csr agent-smith
    certificatesigningrequest.certificates.k8s.io "agent-smith" deleted
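
The CSRs listed by the commands above are ordinary API objects. As a rough sketch, a request for a client certificate might look like the following; the object name and the `expirationSeconds` value are illustrative assumptions, and `request` must hold the base64-encoded PEM CSR (left here as a placeholder).

```yaml
apiVersion: certificates.k8s.io/v1
kind: CertificateSigningRequest
metadata:
  name: amar-dhillon # illustrative name
spec:
  # Placeholder: paste the base64-encoded contents of the .csr file here
  request: <base64-encoded-csr>
  signerName: kubernetes.io/kube-apiserver-client
  expirationSeconds: 86400 # one day; illustrative value
  usages:
  - client auth
```

Once created, the object stays in the Pending state until it is approved or denied.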

View Certificates in K8s¶

Run the command below and look for the `--tls-cert-file` line:

    cat /etc/kubernetes/manifests/kube-apiserver.yaml

The example below shows the certificate files used by the kube-apiserver:

    controlplane ~ ➜ cat /etc/kubernetes/manifests/kube-apiserver.yaml | grep -i tls
    - --tls-cert-file=/etc/kubernetes/pki/apiserver.crt
    - --tls-private-key-file=/etc/kubernetes/pki/apiserver.key

    controlplane ~ ➜ cat /etc/kubernetes/manifests/kube-apiserver.yaml | grep -i etcd
    - --etcd-cafile=/etc/kubernetes/pki/etcd/ca.crt
    - --etcd-certfile=/etc/kubernetes/pki/apiserver-etcd-client.crt
    - --etcd-keyfile=/etc/kubernetes/pki/apiserver-etcd-client.key
    - --etcd-servers=https://127.0.0.1:2379

    # Look for the kubelet client certs in the kube-apiserver manifest
    controlplane ~ ➜ cat /etc/kubernetes/manifests/kube-apiserver.yaml | grep -i kubelet
    - --kubelet-client-certificate=/etc/kubernetes/pki/apiserver-kubelet-client.crt
    - --kubelet-client-key=/etc/kubernetes/pki/apiserver-kubelet-client.key
    - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

    # Look for the etcd server cert in the `--cert-file` flag of the etcd manifest
    controlplane ~ ➜ cat /etc/kubernetes/manifests/etcd.yaml | grep cert
    - --cert-file=/etc/kubernetes/pki/etcd/server.crt
    - --client-cert-auth=true
    - --peer-cert-file=/etc/kubernetes/pki/etcd/peer.crt
    - --peer-client-cert-auth=true
    name: etcd-certs
    name: etcd-certs

Warning

etcd can have its own CA, so this may be a different CA certificate from the one used by the kube-apiserver.

    # The etcd CA cert file appears in the `--peer-trusted-ca-file` and `--trusted-ca-file` flags
    controlplane ~ ➜ cat /etc/kubernetes/manifests/etcd.yaml | grep ca
    - --peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
    - --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
    priorityClassName: system-node-critical

    # Check the Issuer field in the output
    openssl x509 -in /etc/kubernetes/pki/apiserver.crt -text -noout

    # Look at the Subject Alternative Names
    openssl x509 -in /etc/kubernetes/pki/apiserver.crt -text -noout

    # Check the Subject CN
    openssl x509 -in /etc/kubernetes/pki/etcd/server.crt -text -noout

    # Check the expiry date (Validity: Not After)
    openssl x509 -in /etc/kubernetes/pki/apiserver.crt -text -noout

Check Kube API Server logs¶

Run `crictl ps -a` to identify the kube-apiserver container, then run `crictl logs <container-id>` to view its logs.

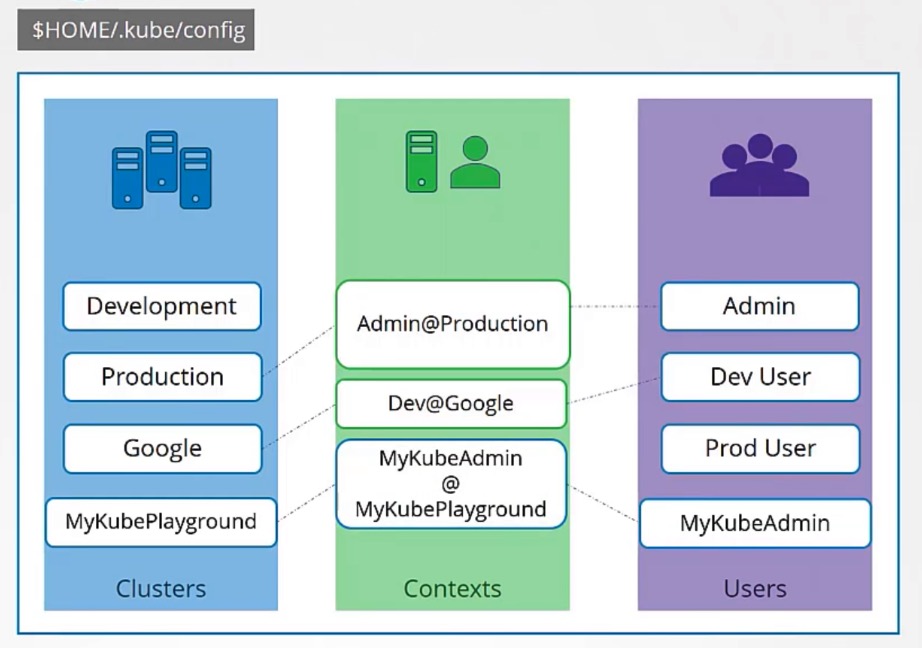

Kubeconfig¶

A kubeconfig is a YAML file containing the Kubernetes cluster details, certificates, and secret tokens used to authenticate to the cluster. You might get this config file directly from the cluster administrator, or from a cloud platform if you are using a managed Kubernetes cluster.

The default location is `~/.kube/config`.
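
To make the structure concrete, here is a minimal sketch of a kubeconfig with one cluster, one user, and one context. The context name `research` matches the command used in these notes; the cluster name, user name, server URL, and file paths are placeholder assumptions.

```yaml
apiVersion: v1
kind: Config
current-context: research
clusters:
- name: dev-cluster # placeholder name
  cluster:
    server: https://dev.example.com:6443 # placeholder API server URL
    certificate-authority: /path/to/ca.crt
users:
- name: dev-user # placeholder name
  user:
    client-certificate: /path/to/dev-user.crt
    client-key: /path/to/dev-user.key
contexts:
# A context ties a user to a cluster (and optionally a namespace)
- name: research
  context:
    cluster: dev-cluster
    user: dev-user
    namespace: default
```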

To print the current context of a specific kubeconfig file:

    kubectl config --kubeconfig=/root/my-kube-config current-context

Update context¶

    kubectl config --kubeconfig=/root/my-kube-config use-context research

    # If using the default kubeconfig file:
    kubectl config use-context <amar-dev-cluster>

Using the Kubeconfig File With Kubectl¶

You can pass a kubeconfig file to kubectl with the `--kubeconfig` flag, which overrides both the default kubeconfig file and the `KUBECONFIG` environment variable.

    kubectl get nodes --kubeconfig=$HOME/.kube/dev_cluster_config

Network Policy¶

- By default all pods can talk to one another.

- NetworkPolicies are an application-centric construct which allow you to specify how a pod is allowed to communicate with various network "entities"

- The entities that a Pod can communicate with are identified through a combination of the following 3 identifiers:

    - Other pods that are allowed (exception: a pod cannot block access to itself)

    - Namespaces that are allowed

    - IP blocks (exception: traffic to and from the node where a Pod is running is always allowed, regardless of the IP address of the Pod or the node)

How to grant access?

When defining a pod-based or namespace-based NetworkPolicy, you use a selector to specify what traffic is allowed to and from the Pod(s) that match the selector. When defining an IP-based NetworkPolicy, you specify the allowed traffic as IP blocks (CIDR ranges).

A sample Network policy is shown below

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: internal-policy
      namespace: default
    spec:
      podSelector:
        matchLabels:
          name: internal
      policyTypes:
      - Egress
      egress:
      - to:
        - podSelector:
            matchLabels:
              name: payroll
        ports:
        - protocol: TCP
          port: 8080
      - to:
        - podSelector:
            matchLabels:
              name: mysql
        ports:
        - protocol: TCP
          port: 3306
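
The policy above uses only pod selectors on egress. As a complementary sketch, the other two identifiers (namespaces and IP blocks) could be used on ingress as follows. The policy name and the CIDR range are illustrative assumptions; the `payroll` label and port 8080 are reused from the sample above.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: payroll-ingress-policy # hypothetical name
  namespace: default
spec:
  podSelector:
    matchLabels:
      name: payroll
  policyTypes:
  - Ingress
  ingress:
  - from:
    # Allow traffic from any pod in the "default" namespace ...
    - namespaceSelector:
        matchLabels:
          kubernetes.io/metadata.name: default
    # ... or from this CIDR range (illustrative value)
    - ipBlock:
        cidr: 10.0.0.0/24
    ports:
    - protocol: TCP
      port: 8080
```

Separate entries under `from` are ORed, so traffic matching either the namespace selector or the IP block is allowed on port 8080.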